Summarizing Production Server Failure Modes

Common server errors that I managed to find on the Kubernetes mailing list (possibly not very representative of production operations, since many posts are beginner questions):

- Misconfiguration or mis-setup; version mismatch; software bug

- Service fails to start: the service logs errors, its status reports failure, or commands return errors.

- Network down, unable to connect, or firewall issues.

- Process dies (especially the proxy process).

- Misconfiguration of the network or another component.

- Process/service becomes non-responsive

- Is anybody reporting disk degradation/corruption errors?
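Several of the items above (process dies, network down, firewall issue) look different at the TCP level, so a small probe can help tell them apart. The following is a minimal sketch, not taken from the mailing list threads; the function name `probe_tcp` and the result labels are hypothetical. Note that a hung process may still accept connections, so "up" here only means the port is open, not that the service is responsive.

```python
import socket


def probe_tcp(host, port, timeout=2.0):
    """Classify a TCP endpoint by how a connection attempt ends.

    The returned labels are illustrative names chosen for this sketch.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "up"           # something is listening on the port
    except ConnectionRefusedError:
        return "refused"          # host reachable, nothing listening: process likely dead
    except socket.timeout:
        return "timeout"          # no answer in time: firewall drop, partition, or hung host
    except OSError:
        return "unreachable"      # name resolution failure, no route, and similar errors
```

A caller could run this against each service port and treat "refused" as a hint to check the process, and "timeout" as a hint to check the network path first.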

Kubernetes has an HA doc, which happens to summarize some common failure modes:

- VM(s) shutdown

- Network partition within cluster, or between cluster and users.

- Crashes in Kubernetes software

- Data loss or unavailability of persistent storage (e.g. GCE PD or AWS EBS volume).

- Operator error, e.g. misconfigured Kubernetes software or application software.

I also checked the OpenStack operators mailing list for more failure modes:

- Unexpectedly high CPU/disk usage.

- DHCP down / unable to acquire an IP address.

- An operation (usually VM spawning) hangs forever.

Some common Linux failures:

- Read-only file system error (e.g. the FS is corrupt, or there is no free space)

- Kernel panic

- Kernel soft lockup / hard lockup
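These Linux failures usually leave recognizable lines in the kernel log, so a first-pass detector can be a handful of regular expressions. The sketch below is an illustration only: the pattern list and the names `classify_line` and `summarize` are assumptions, and the exact message wording differs between kernel versions and file systems.

```python
import re

# Substrings modeled on typical kernel messages; wording varies by kernel version.
PATTERNS = [
    ("kernel_panic", re.compile(r"Kernel panic - not syncing")),
    ("soft_lockup", re.compile(r"soft lockup - CPU#\d+ stuck")),
    ("hard_lockup", re.compile(r"hard LOCKUP", re.IGNORECASE)),
    ("readonly_fs", re.compile(r"Remounting filesystem read-only|Read-only file system")),
]


def classify_line(line):
    """Return the first matching failure label for a log line, or None."""
    for label, pattern in PATTERNS:
        if pattern.search(line):
            return label
    return None


def summarize(lines):
    """Count how many lines fall into each failure category."""
    counts = {}
    for line in lines:
        label = classify_line(line)
        if label is not None:
            counts[label] = counts.get(label, 0) + 1
    return counts


# Illustrative sample lines, not taken from a real incident.
SAMPLE_LOG = [
    "Kernel panic - not syncing: Fatal exception",
    "watchdog: BUG: soft lockup - CPU#3 stuck for 22s! [kworker/3:1:114]",
    "EXT4-fs (sda1): Remounting filesystem read-only",
    "eth0: link up",
]
```

Feeding the output of `dmesg` or `journalctl -k` line by line into `summarize` would give a rough per-host count of each failure type.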

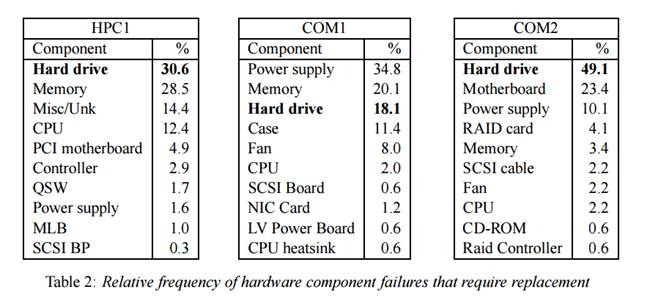

This paper gives a relative frequency chart of hardware failures that required replacement, covering one high-performance computing cluster (HP1) and two internet service providers (COM1, COM2).

"Disk failures in the real world: What does an MTTF of 1,000,000 hours mean to you?" shows that

- Disk failures exhibit significant levels of autocorrelation in time (failures follow failures in time)
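To make "autocorrelation in time" concrete, here is a minimal sketch of the standard sample autocorrelation applied to failure counts per time bucket (for example, failures per week). The function name and the two series below are made up for illustration and are not data from the paper. A clearly positive value at lag 1 means a bucket with many failures tends to be followed by another bucket with many failures.

```python
def autocorrelation(series, lag):
    """Sample autocorrelation of a numeric series at the given lag."""
    n = len(series)
    if not 0 < lag < n:
        raise ValueError("lag must be between 1 and len(series) - 1")
    mean = sum(series) / n
    variance_sum = sum((x - mean) ** 2 for x in series)
    if variance_sum == 0:
        return 0.0  # a constant series carries no correlation signal
    covariance_sum = sum(
        (series[i] - mean) * (series[i + lag] - mean) for i in range(n - lag)
    )
    return covariance_sum / variance_sum


# Synthetic weekly failure counts, invented for this example.
bursty = [0, 0, 0, 5, 6, 5, 0, 0, 0, 0, 6, 5, 6, 0, 0, 0]   # failures cluster in time
alternating = [1, 0, 1, 0, 1, 0, 1, 0]                      # failures never repeat

print(autocorrelation(bursty, 1))       # positive: failures follow failures
print(autocorrelation(alternating, 1))  # negative: a failure week is followed by a quiet one
```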

"RAIDShield: Characterizing, Monitoring, and Proactively Protecting Against Disk Failures" reveals that

- The reallocated sector count correlates strongly with impending disk failures

- Many disks fail at a similar age

- Accumulating sector errors contribute to whole-disk failure: disk reliability deteriorates continuously until the disk either fails soon afterward or suffers a larger burst of sector errors. (The reallocated sector count, RS, can be observed.)
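Since the reallocated sector count is observable (it is SMART attribute 5), the findings above suggest a simple monitoring rule: flag a disk when its count is high or growing quickly. The sketch below is only an illustration of that idea, not the method from the paper; the function name `flag_at_risk` and both default thresholds are hypothetical and would need tuning against real fleet data.

```python
def flag_at_risk(previous, current, threshold=100, max_growth=20):
    """Return sorted disk IDs whose reallocated sector count looks risky.

    previous, current: dicts mapping disk ID to reallocated sector count
    from two consecutive polls. threshold and max_growth are placeholder
    values chosen for this example, not recommendations.
    """
    flagged = []
    for disk_id, count in current.items():
        # Disks with no earlier reading are treated as having zero growth.
        growth = count - previous.get(disk_id, count)
        if count >= threshold or growth >= max_growth:
            flagged.append(disk_id)
    return sorted(flagged)


# Made-up readings for illustration.
previous_poll = {"sda": 2, "sdb": 90, "sdc": 5}
current_poll = {"sda": 3, "sdb": 120, "sdc": 40, "sdd": 10}
print(flag_at_risk(previous_poll, current_poll))
```

Checking growth as well as the absolute count reflects the point that sector errors accumulate before the whole disk fails.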

We can download public computer failure datasets at
